Collective Perception – Information Fusion and Visualization

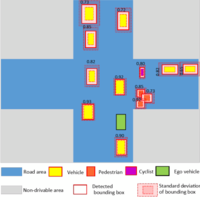

Autonomous vehicles (AVs) are expected to drive more safely and efficiently than humans. They carry various on-board sensors that collect data about the environment. Currently, AVs can hardly reach Level 5 of the SAE J3016 standard, at which they should be able to drive under all conditions. Occlusions caused by dense traffic or tall buildings, the limited observation range of sensors, adverse weather, and sensor noise all degrade the performance of the perception system.

To address these problems, this doctoral project aims to develop a more reliable and safe collective perception system in which Connected Autonomous Vehicles (CAVs) share their perception information. Previous studies examined at which processing stage data should be shared and how the shared data should be fused. Some works share detected objects, while others share raw data or feature data. They have shown that fusing raw or feature data performs better than fusing detected objects, but at the cost of a higher communication load.

In this doctoral project, collective multi-agent perception is further enhanced within a neural network that aims to find a better fusion mode, so that the perception system achieves better performance with an efficient strategy for sharing Collective Perception Messages (CPMs). The CPMs are optimized to contain the most informative and least redundant data. Finally, the concept is evaluated in a simulation system with different traffic modes, traffic participants, and sensor configurations. The SUMO traffic simulation creates the traffic flow, and the CARLA vehicle simulator renders the 3D city scenes and generates the sensor data.
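To make the idea of "most informative and least redundant" concrete, the following is a minimal sketch and not the project's method, which learns the sharing strategy within a neural network. It assumes each candidate piece of data covers one cell of a hypothetical grid, carries a hypothetical informativeness score, and has a known size; the names `Candidate` and `select_cpm_payload` are invented for illustration. Candidates are picked greedily by score per byte, skipping cells the receiver already covers and respecting a bandwidth budget.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set, Tuple

Cell = Tuple[int, int]  # hypothetical index of a grid cell around the vehicle


@dataclass(frozen=True)
class Candidate:
    """One piece of perception data that could go into a message (assumed layout)."""
    cell: Cell        # grid cell this data describes
    score: float      # assumed informativeness, e.g. a confidence value
    size_bytes: int   # payload size if this candidate is transmitted


def select_cpm_payload(
    candidates: Iterable[Candidate],
    known_cells: Iterable[Cell],
    budget_bytes: int,
) -> List[Candidate]:
    """Greedily pick informative, non-redundant candidates within a byte budget."""
    covered: Set[Cell] = set(known_cells)  # cells the receiver already observes
    selected: List[Candidate] = []
    used = 0
    # Rank by informativeness per transmitted byte, best first.
    ranked = sorted(candidates, key=lambda c: c.score / c.size_bytes, reverse=True)
    for cand in ranked:
        if cand.cell in covered:
            continue  # redundant: receiver or an earlier pick covers this cell
        if used + cand.size_bytes > budget_bytes:
            continue  # does not fit into the remaining budget
        selected.append(cand)
        covered.add(cand.cell)
        used += cand.size_bytes
    return selected


if __name__ == "__main__":
    candidates = [
        Candidate(cell=(0, 0), score=0.9, size_bytes=100),
        Candidate(cell=(0, 1), score=0.8, size_bytes=100),
        Candidate(cell=(1, 1), score=0.2, size_bytes=100),
    ]
    # The receiver already sees cell (0, 0), so only new cells are worth sending.
    payload = select_cpm_payload(candidates, known_cells=[(0, 0)], budget_bytes=150)
    print([c.cell for c in payload])
```

A greedy ranking is only a simple stand-in here; it illustrates the trade-off between perception gain and communication load that the sharing strategy has to balance.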

Researcher: Yunshuang Yuan, M. Sc.